⚠️ Disclosure: This post contains affiliate links. We may earn a commission at no extra cost to you.

Understanding robots.txt and sitemaps is crucial for website SEO. These tools help search engines crawl and index your site efficiently.

Imagine having a website but nobody can find it. That’s where robots.txt and sitemaps come in. These files guide search engines on what to crawl and what to ignore. The robots.txt file controls crawler access to parts of your site, while sitemaps list the pages you want indexed.

Together, they ensure your site is search-engine friendly. This improves visibility and ranking. In this blog, we’ll explore how these tools work, why they are important, and how to create them. Let’s dive in and make your website more discoverable!

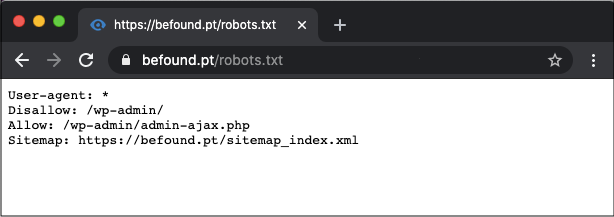

Understanding how search engines navigate your site is crucial. One of the primary tools to guide them is the robots.txt file. This small but powerful text file can help you control which parts of your website are accessible to search engine crawlers.

The robots.txt file is a text document in your website’s root directory. It tells search engine crawlers which pages they can or cannot visit. Think of it as a set of instructions for search engines.

| Directive | Description |
|---|---|
| User-agent | Specifies which crawler the rule applies to. |
| Disallow | Defines the pages or directories that should not be crawled. |
| Allow | Specifies the pages or directories that can be crawled. |

The purpose of robots.txt is to manage search engine activity on your site. It can help:

The importance of robots.txt lies in its ability to improve your site’s SEO health. By guiding crawlers properly, you ensure that the most relevant content is indexed. This helps improve your website’s search engine ranking.

    User-agent: *
    Disallow: /private/
    Allow: /public/

In the above code, all user-agents are instructed to avoid the /private/ directory but are allowed to access the /public/ directory.
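To see how a crawler might read these rules, here is a minimal sketch using Python's standard `urllib.robotparser` module. The bot name `ExampleBot`, the `is_allowed` helper, and the `www.example.com` paths are assumptions made for illustration only.

```python
from urllib.robotparser import RobotFileParser

# The same rules as in the example above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /public/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_allowed(path: str, agent: str = "ExampleBot") -> bool:
    """Return True if the given (hypothetical) crawler may fetch the path."""
    return parser.can_fetch(agent, "https://www.example.com" + path)


print(is_allowed("/private/report.html"))  # False
print(is_allowed("/public/index.html"))    # True
print(is_allowed("/blog/"))                # True (no rule matches)
```

Paths that match no rule are treated as crawlable, which is why `/blog/` is allowed here.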

Proper use of robots.txt can make a big difference. It is a simple yet effective way to manage how search engines interact with your site.

Sitemaps play a crucial role in search engine optimization (SEO). They help search engines understand your website’s structure. This improves your chances of being indexed properly. Let’s explore what sitemaps are and their different types.

A sitemap is a file that lists the pages of your website. This file informs search engines about the organization of your site. Think of it as a roadmap for search engines.

There are two main formats of sitemaps: XML sitemaps, written for search engines, and HTML sitemaps, written for visitors.

Both formats help improve the user experience and site indexing.

There are different types of sitemaps based on their purpose:

| Type | Description |
|---|---|
| XML Sitemap | Lists URLs for a site, helping search engines crawl efficiently. |
| HTML Sitemap | Aids users in navigating a website. |
| Video Sitemap | Specifies information about video content on your site. |
| Image Sitemap | Provides data about images on a website. |

Each type of sitemap serves a unique purpose. They all contribute to the overall health of your website. Understanding and using these sitemaps can enhance your SEO efforts.

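Creating an effective robots.txt file is crucial for guiding search engines. It tells them which parts of your site they may crawl. This file can improve your website’s SEO and keep crawlers out of areas you do not want crawled. It is not a security mechanism, though: the file is public, and crawlers follow it voluntarily, so truly sensitive content needs real access control. Knowing its basic syntax and structure ensures proper implementation.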

The robots.txt file resides in your website’s root directory. It uses a simple syntax to communicate with web crawlers. Each rule starts with a user-agent line. This specifies which crawler the rule applies to. The next line contains the directive. It tells the crawler what to do.

A basic example looks like this:

    User-agent: *
    Disallow: /private/

In this example, all crawlers are disallowed from accessing the /private/ directory. The asterisk (*) means the rule applies to all crawlers.

Several common directives help you control crawler behavior. The Disallow directive blocks access to specified paths. For example:

Disallow: /admin/

This blocks crawlers from accessing the /admin/ directory. The Allow directive permits access to specific paths. It is useful within disallowed directories. For example:

Allow: /admin/public/

This allows access to the /admin/public/ directory, even if /admin/ is disallowed. The Sitemap directive informs crawlers about the location of your sitemap. It looks like this:

Sitemap: http://www.example.com/sitemap.xml

Including a sitemap directive ensures crawlers find your sitemap easily. It helps them index your site more efficiently.
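When an Allow rule sits inside a disallowed directory, crawlers that follow the Robots Exclusion Protocol standard (RFC 9309) apply the most specific, meaning longest, matching rule, and Allow wins a tie. The sketch below illustrates that idea only. The function name `longest_match_allowed` and the rule-list format are assumptions for this example, and wildcards (`*`, `$`) are deliberately not handled. Note that Python's built-in `urllib.robotparser` uses the first matching rule in file order instead, so rule order matters there.

```python
def longest_match_allowed(path: str, rules: list[tuple[str, str]]) -> bool:
    """Decide whether a path may be crawled using longest-prefix matching.

    rules is a list of (directive, path_prefix) pairs,
    e.g. ("disallow", "/admin/"). Wildcards are not supported.
    """
    best_len = -1
    allowed = True  # a path that matches no rule is allowed
    for directive, prefix in rules:
        if not prefix or not path.startswith(prefix):
            continue
        is_allow = directive.lower() == "allow"
        # A longer prefix is more specific; on a tie, Allow wins.
        if len(prefix) > best_len or (len(prefix) == best_len and is_allow):
            best_len = len(prefix)
            allowed = is_allow
    return allowed


RULES = [("disallow", "/admin/"), ("allow", "/admin/public/")]

print(longest_match_allowed("/admin/settings", RULES))     # False
print(longest_match_allowed("/admin/public/help", RULES))  # True
print(longest_match_allowed("/blog/post", RULES))          # True
```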

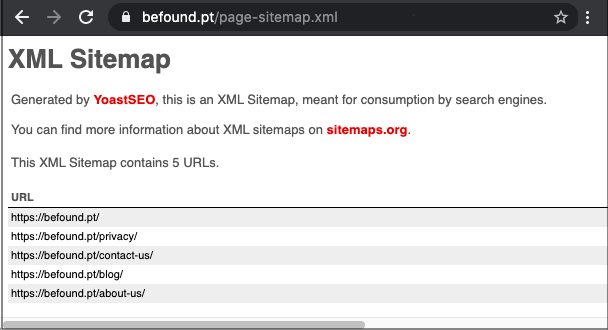

A comprehensive sitemap is essential for a successful website. It helps search engines find and index your web pages. This improves your site’s visibility and ranking. Let’s explore the key elements and best practices for building comprehensive sitemaps.

To create an effective sitemap, include the following essential elements:

Here is an example of a basic XML sitemap:

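    <?xml version="1.0" encoding="UTF-8"?>
    <urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <url>
        <loc>http://www.example.com/</loc>
        <lastmod>2023-10-01</lastmod>
        <changefreq>monthly</changefreq>
        <priority>1.0</priority>
      </url>
      <url>
        <loc>http://www.example.com/about/</loc>
        <lastmod>2023-09-15</lastmod>
        <changefreq>yearly</changefreq>
        <priority>0.8</priority>
      </url>
    </urlset>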
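A sitemap like the example above does not have to be written by hand. As a rough sketch, it could be generated with Python's standard `xml.etree.ElementTree` module. The `build_sitemap` function and the `PAGES` list are hypothetical names for this illustration, and `ET.indent` assumes Python 3.9 or newer.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

# Hypothetical page data mirroring the example sitemap.
PAGES = [
    {"loc": "http://www.example.com/", "lastmod": "2023-10-01",
     "changefreq": "monthly", "priority": "1.0"},
    {"loc": "http://www.example.com/about/", "lastmod": "2023-09-15",
     "changefreq": "yearly", "priority": "0.8"},
]


def build_sitemap(pages) -> str:
    """Build an XML sitemap string from a list of page dictionaries."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        url = ET.SubElement(urlset, "url")
        # Only <loc> is required; the other tags are optional.
        for tag in ("loc", "lastmod", "changefreq", "priority"):
            if tag in page:
                ET.SubElement(url, tag).text = str(page[tag])
    ET.indent(urlset)  # pretty-print (Python 3.9+)
    xml_bytes = ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


print(build_sitemap(PAGES))
```

Building the file from data rather than by hand makes it easier to keep `lastmod` values in step with your actual content.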

Follow these best practices to ensure your sitemap is effective:

By following these practices, your sitemap will be a valuable tool. It will help search engines understand and index your site more effectively.

Integrating robots.txt with sitemaps is essential for effective website indexing. This integration guides search engines on which pages to crawl. It also helps in optimizing your site’s visibility in search results.

Ensure the robots.txt file and sitemap are compatible. The robots.txt file should reference the sitemap. This tells search engines where to find the sitemap. Use the following code to include the sitemap in the robots.txt file:

    User-agent: *
    Disallow: /private/

    Sitemap: https://www.example.com/sitemap.xml

Always test the robots.txt file for errors. Use a tool such as the robots.txt report in Google Search Console, which replaced the older Robots.txt Tester. It helps check whether the file blocks important pages. Confirm that the sitemap URL is correct.
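A quick local check can complement those tools. The sketch below uses Python's standard `urllib.robotparser` (its `site_maps()` method needs Python 3.8 or newer) to list any important pages that the rules block and to read back the declared sitemap URL. The `audit` function and the `IMPORTANT_PATHS` list are hypothetical and exist only for this example.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

Sitemap: https://www.example.com/sitemap.xml
"""

# Hypothetical pages you expect search engines to reach.
IMPORTANT_PATHS = ["/", "/about/", "/private/pricing.html"]


def audit(robots_txt, important_paths, base="https://www.example.com"):
    """Return (blocked important paths, sitemap URLs declared in the file)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    blocked = [p for p in important_paths
               if not parser.can_fetch("*", base + p)]
    sitemaps = parser.site_maps() or []  # site_maps() returns None if absent
    return blocked, sitemaps


blocked, sitemaps = audit(ROBOTS_TXT, IMPORTANT_PATHS)
print(blocked)   # ['/private/pricing.html']
print(sitemaps)  # ['https://www.example.com/sitemap.xml']
```

Here the check flags `/private/pricing.html`, a page that was meant to be found but sits under a disallowed directory.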

Mistakes can affect site indexing. Avoid these common errors:

Keep the robots.txt file simple. Complex rules can confuse search engines. Regularly review and update the file.

| Mistake | Solution |
|---|---|
| Outdated Sitemap URL | Update robots.txt with the correct sitemap URL |
| Blocking Important Pages | Review and remove unnecessary disallow rules |
| Incorrect Formatting | Follow standard robots.txt syntax rules |
| Not Testing | Use tools to test and verify the file |

The robots.txt file plays a crucial role in SEO strategies. It offers several benefits that can enhance your website’s performance and visibility. Understanding these benefits can help you optimize your site effectively.

One major benefit of robots.txt is the control it offers over web crawlers. You can specify which parts of your site should not be crawled. This steers crawlers away from irrelevant pages and lets them focus on more important ones. Keep in mind that robots.txt controls crawling, not indexing: a blocked URL can still appear in search results if other pages link to it, so use a noindex directive or authentication for pages that must stay out of search.

Using robots.txt can also help your site’s performance. By blocking unnecessary pages from being crawled, you reduce the load crawlers place on your server, which matters most on large sites. A server under less strain can respond faster, and faster pages tend to give a better user experience and lower bounce rates. Both are positives for your SEO efforts.

Sitemaps are essential tools for SEO. They help search engines understand your website structure. This improves the chances of better indexing. A well-structured sitemap can significantly boost your site’s visibility. Here we will explore the SEO benefits of sitemaps.

Search engines use crawlers to index web pages. A sitemap makes this process faster. It lists your site’s pages in a structured manner. This helps crawlers find and index your content efficiently. It also reduces the chance that pages are missed during crawling.
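To make the idea concrete, here is a small sketch of how a program might read a sitemap and collect the listed URLs, roughly as a crawler would. It uses Python's standard `xml.etree.ElementTree`. The `list_urls` function and the inline `SITEMAP_XML` sample are assumptions for illustration; a real crawler would fetch the file over HTTP.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Inline sample standing in for a downloaded sitemap.xml.
SITEMAP_XML = b"""<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>http://www.example.com/</loc><lastmod>2023-10-01</lastmod></url>
  <url><loc>http://www.example.com/about/</loc><lastmod>2023-09-15</lastmod></url>
</urlset>
"""


def list_urls(xml_bytes: bytes) -> list[tuple[str, str]]:
    """Return (url, lastmod) pairs from a sitemap; lastmod may be empty."""
    root = ET.fromstring(xml_bytes)
    entries = []
    for url in root.findall("sm:url", NS):
        loc = url.findtext("sm:loc", namespaces=NS)
        lastmod = url.findtext("sm:lastmod", default="", namespaces=NS)
        if loc:
            entries.append((loc.strip(), lastmod.strip()))
    return entries


for loc, lastmod in list_urls(SITEMAP_XML):
    print(loc, lastmod)
```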

Crawl efficiency can be improved by:

With a clear sitemap, search engines can prioritize crawling important pages. This reduces the risk of missing out on key content. It also minimizes the time spent on irrelevant pages.

A sitemap helps boost indexation by providing a clear roadmap of your site. It helps search engines discover all of your pages, including deeply linked ones. This is especially important for new or large websites.

Key benefits of boosted indexation include:

Sitemaps also help with indexing multimedia content. This includes images, videos, and other non-text content. Including these in your sitemap makes them easier for search engines to discover and index. This can improve the overall visibility and ranking of your site.

In summary, sitemaps are crucial for SEO. They improve crawl efficiency and boost indexation. This leads to better visibility and higher rankings in search engines.


Managing robots.txt and sitemaps is crucial for website optimization. Proper management ensures search engines crawl and index your site correctly. Using the right tools can simplify this process and enhance your site’s visibility.

Various tools help manage robots.txt and sitemaps efficiently. Below are some of the most popular ones:

Managing robots.txt and sitemaps effectively requires some best practices. Follow these tips for better results:

- Keep your robots.txt and sitemaps updated with new content.
- Test your robots.txt file using Google Search Console.
- Make sure your robots.txt file uses simple and correct syntax.

Using these tools and tips can improve your site’s performance and visibility. Proper management of robots.txt and sitemaps is key to successful SEO.

Case studies and success stories provide valuable insights into how businesses have effectively used robots.txt and sitemaps to improve their website’s SEO performance. By analyzing real-world examples, we can uncover practical lessons and strategies that can be applied to your own website.

Let’s explore some real-world examples of companies that have successfully utilized robots.txt and sitemaps:

| Company | Strategy | Outcome |
|---|---|---|
| Company A | Optimized their robots.txt to block duplicate content | Improved crawl efficiency and ranking |
| Company B | Implemented a sitemap to index new content quickly | Increased organic traffic by 30% |
| Company C | Combined a dynamic sitemap with effective robots.txt rules | Enhanced site visibility and user experience |

From these examples, we can draw several key lessons:

These strategies demonstrate how effective robots.txt and sitemaps can be in enhancing SEO performance. By learning from these real-world examples, you can apply similar tactics to improve your own website’s visibility and traffic.


Understanding robots.txt and sitemaps is crucial for SEO. These tools help search engines index your site effectively. This section will recap key points and suggest future strategies.

Implement these strategies to keep your site optimized. Regular maintenance of robots.txt and sitemaps is key for long-term success.


A robots.txt file is a text file used to control web crawlers. It tells search engines which pages they may or may not crawl.

A sitemap is important because it helps search engines understand your website structure. It makes it more likely that all of your pages are discovered and indexed.

To create a robots.txt file, use a plain text editor. Specify rules for web crawlers and upload it to your website’s root directory.

Yes, you can have multiple sitemaps. This is especially useful for large websites. Use a sitemap index file to list all sitemaps.
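The sitemap protocol limits a single sitemap file to 50,000 URLs, which is one reason large sites split theirs up. The sketch below shows one way to chunk a URL list and build a sitemap index that points at each part. The `chunk` and `build_sitemap_index` functions and the `sitemap-N.xml` file names are hypothetical choices for this example, and `ET.indent` assumes Python 3.9 or newer.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000  # per-file limit in the sitemap protocol


def chunk(urls, size=MAX_URLS_PER_SITEMAP):
    """Split a URL list into parts small enough for one sitemap each."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_sitemap_index(sitemap_urls) -> str:
    """Build a sitemap index XML string listing each child sitemap."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for sitemap_url in sitemap_urls:
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = sitemap_url
    ET.indent(index)  # pretty-print (Python 3.9+)
    xml_bytes = ET.tostring(index, encoding="utf-8", xml_declaration=True)
    return xml_bytes.decode("utf-8")


# Hypothetical site with 120,000 pages.
urls = [f"https://www.example.com/page-{i}" for i in range(120_000)]
parts = chunk(urls)
sitemap_files = [f"https://www.example.com/sitemap-{n}.xml"
                 for n in range(1, len(parts) + 1)]

print(len(parts))  # 3
print(build_sitemap_index(sitemap_files))
```

Each part would then be written out as its own sitemap file, and the index URL is what you would reference from robots.txt.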

Understanding robots.txt and sitemaps boosts your website’s search engine visibility. These tools guide search engines to your site’s important content. Implementing them properly enhances your site’s SEO performance. Keep your robots.txt file updated and your sitemap accurate. This helps search engines index your site correctly.

Regularly check for errors and fix them promptly. This keeps your site friendly for both users and search engines. Remember, clear and organized navigation improves user experience. Investing time in these areas pays off in better search rankings and more visitors.

Sofia Grant is a business efficiency expert with over a decade of experience in digital strategy and affiliate marketing. She helps entrepreneurs scale through automation, smart tools, and data-driven growth tactics. At TaskVive, Sofia focuses on turning complex systems into simple, actionable insights that drive real results.

Affiliate Marketer | SEO Specialist | Blogger at Elite Global Marketing Agency

Ms. Sultana brings over 16 years of experience working with global clients across a range of skills and services. For the last 5 years she has worked as an affiliate marketer, specializing in high-ticket campaigns that drive exponential growth. She holds a degree in Computer Science and Engineering and has earned skills certificates from several institutes, academies, and platforms. As part of the Elite Global Marketing team, Sultana has helped clients generate millions in revenue through strategic partnerships, innovative funnels, and data-driven insights. She’s passionate about empowering businesses to scale by connecting them with the right affiliate opportunities.
